The Threat Landscape in 2026

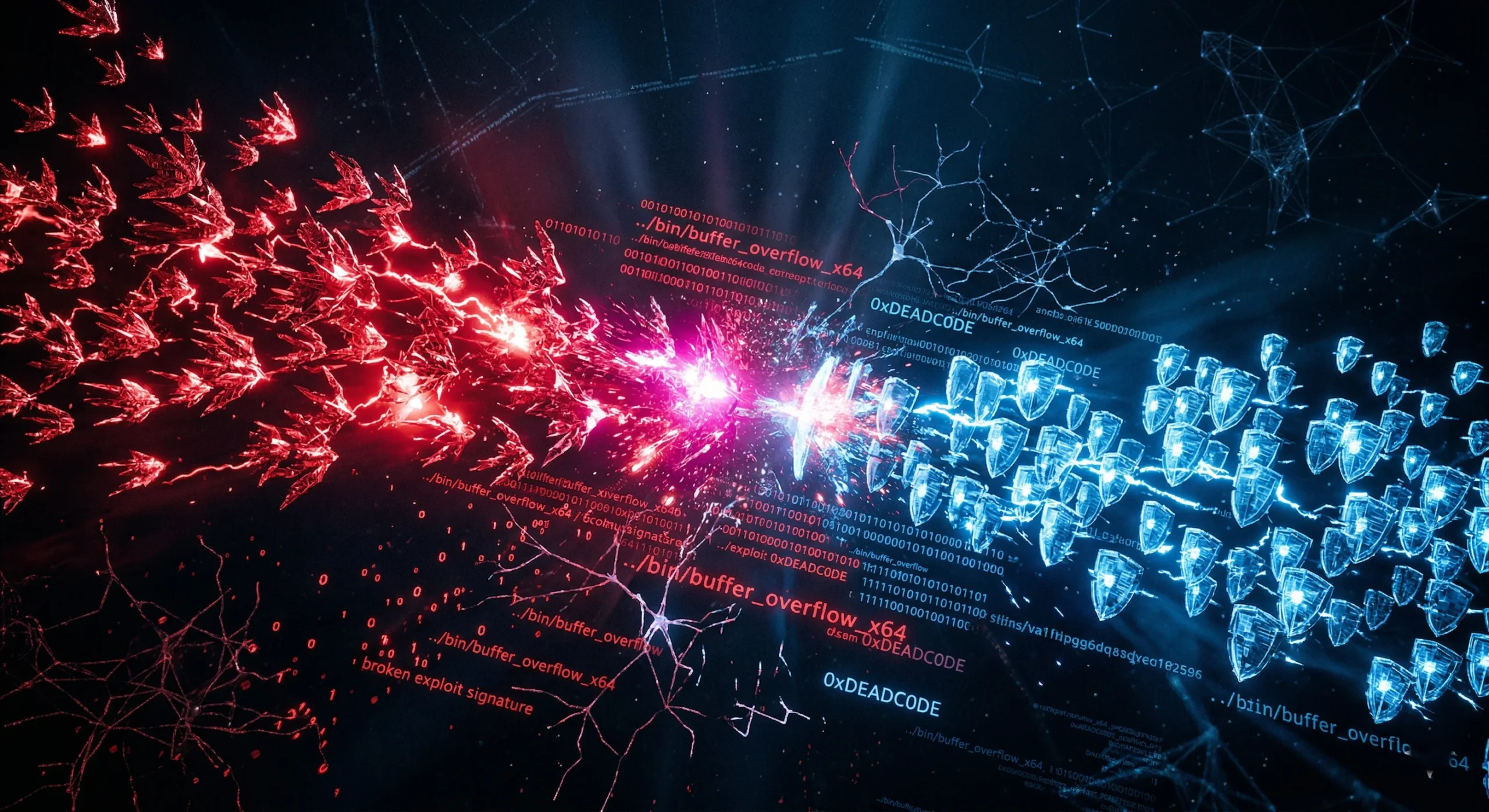

Cybersecurity in 2026 is not a cat-and-mouse game. It is a cat-and-cat game, where both sides are increasingly autonomous. Attackers now deploy AI-generated spear-phishing campaigns that produce personalized emails that can be hard to tell apart from those written by trusted colleagues. They use AI to scan for vulnerabilities at scale, test exploits automatically, and adapt tactics in real time based on the defenses they encounter.

Against this backdrop, the traditional reactive security posture is structurally inadequate. A security operations team reviewing alerts built from yesterday’s attack patterns will always lag an adversary using today’s AI tools. Gartner’s response, and the direction much of the industry is moving, is what it calls preemptive cybersecurity: shifting the defensive posture from detection and response to prediction and prevention.

What Proactive AI Security Actually Looks Like

The practical manifestation of this shift involves several converging capabilities. Behavioral AI models establish baselines of normal activity for every user, device, and application in an environment, then flag deviations with high precision before they escalate into incidents. Autonomous threat-hunting agents continuously scan the attack surface for vulnerabilities at a speed and breadth no human team can match.
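To make the baseline-and-deviation idea concrete, here is a minimal sketch in Python. It assumes a single numeric signal per user (a hypothetical daily login count) and a simple z-score threshold. Production behavioral models are far richer, and the names `build_baseline`, `is_anomalous`, and the threshold of 3.0 are illustrative assumptions, not any vendor's method.

```python
from statistics import mean, stdev

def build_baseline(history):
    """Summarize past observations as (mean, sample standard deviation)."""
    return mean(history), stdev(history)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose distance from the mean exceeds `threshold` standard deviations."""
    mu, sigma = baseline
    if sigma == 0:
        # No variation in the history: any different value counts as a deviation.
        return value != mu
    return abs(value - mu) / sigma > threshold

# Hypothetical daily login counts for one user over a week (assumed data).
history = [12, 15, 11, 14, 13, 12, 16]
baseline = build_baseline(history)

print(is_anomalous(14, baseline))  # a typical day
print(is_anomalous(90, baseline))  # a sudden spike
```

With this history, a value of 14 stays within the baseline while 90 is flagged. A fixed z-score is easy to reason about but sensitive to outliers in the history, which is one reason real systems tend to use more robust statistics or learned models.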

The concept of digital provenance — verifying the origin and integrity of software, data, and AI-generated content — is also gaining significant traction. In a world where AI can generate convincing code, documents, and communications at scale, the ability to cryptographically verify that a piece of software is what it claims to be becomes a foundational security requirement.
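One small piece of this can be sketched with Python's standard library: checking that an artifact matches a digest its publisher made available. This covers only integrity. Verifying origin additionally requires a digital signature over the digest, which is omitted here. The function names and the sample bytes are hypothetical.

```python
import hashlib
import hmac

def sha256_digest(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()

def verify_artifact(data: bytes, expected_digest: str) -> bool:
    """Check the artifact against a published digest using a constant-time comparison."""
    return hmac.compare_digest(sha256_digest(data), expected_digest.lower())

# Hypothetical release contents and the digest a publisher would list for them.
artifact = b"release-1.0 contents"
published = sha256_digest(artifact)

print(verify_artifact(artifact, published))                  # unmodified artifact
print(verify_artifact(artifact + b" (tampered)", published)) # altered artifact
```

The unmodified artifact verifies and the altered one does not. The check is only as trustworthy as the channel that delivered the expected digest, which is why provenance schemes sign digests rather than simply publishing them.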

The Human Factor Has Not Gone Away

The most sophisticated AI security stack in the world does not protect an organization from an employee clicking a malicious link in a convincing AI-generated email. Social engineering remains the most reliable attack vector precisely because it exploits human psychology rather than technical vulnerabilities. The organizations with the strongest security postures combine AI-powered technical defenses with continuous, realistic security awareness programs — not the annual compliance checkbox training that most organizations still rely on.

“We are no longer defending against hackers. We are defending against hacker-trained AI systems that never sleep, never take weekends off, and learn from every failed attempt.”

Conclusion

The robot revolution is not coming. It is already on the warehouse floor, in the surgical suite, and moving through the fields. The organizations that treat physical AI as a distant future technology will spend the next five years catching up to competitors who treated it as a present-day operational decision. The machines are ready. The real question is whether the humans leading these organizations are.
